[We are experimenting with Twitter threads.]

We’re always reading about how the pandemic has created a new emphasis on preprints, so it stands to reason that non-reviewed preposts would now have a place in blogs. Maybe then I’ll “publish” some of the half-baked posts languishing in draft on errorstatistics.com. I’ll update or replace this prepost after reviewing.

The Booster wars (more…)

The tenth meeting of our Phil Stat Forum*:

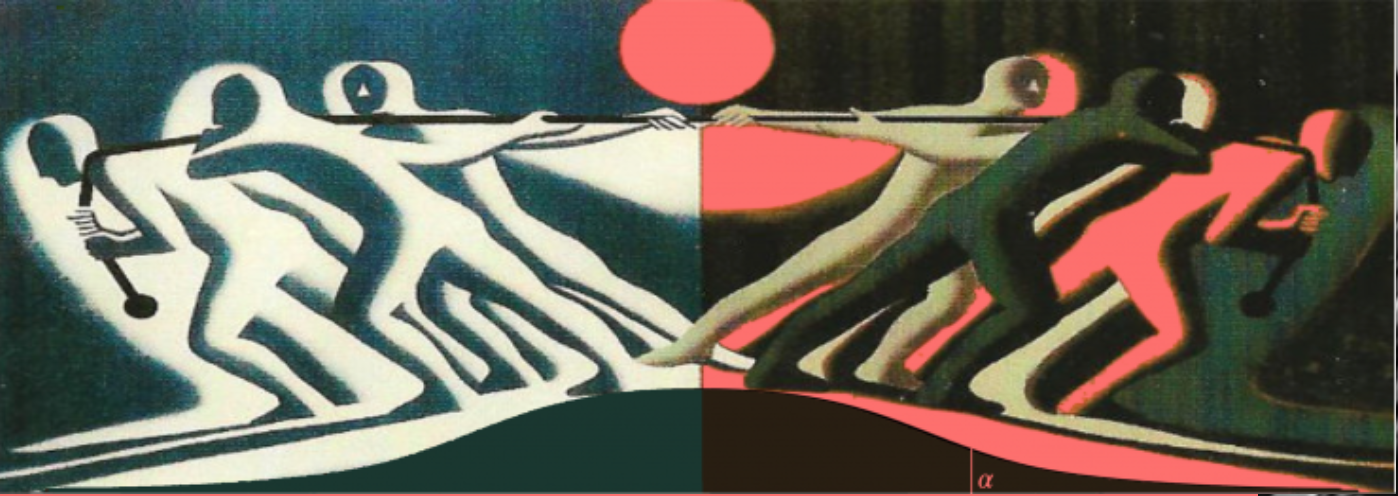

The Statistics Wars

24 June 2021

TIME: 15:00-16:45 (London); 10:00-11:45 (New York, EST)

For information about the Phil Stat Wars forum and how to join, click on this link.

“Have Covid-19 lockdowns led to an increase in domestic violence? Drawing inferences from police administrative data”

Katrin Hohl (more…)

The ninth meeting of our Phil Stat Forum*:

The Statistics Wars

20 May 2021

TIME: 15:00-16:45 (London); 10:00-11:45 (New York, EST)

For information about the Phil Stat Wars forum and how to join, click on this link.

“Objective Bayesianism from a philosophical perspective”

Jon Williamson (more…)

The eighth meeting of our Phil Stat Forum*:

The Statistics Wars

22 April 2021

TIME: 15:00-16:45 (London); 10:00-11:45 (New York, EST)

For information about the Phil Stat Wars forum and how to join, click on this link.

“How an information metric could bring truce to the statistics wars”

Daniele Fanelli (more…)

The seventh meeting of our Phil Stat Forum*:

The Statistics Wars

25 March, 2021

TIME: 15:00-16:45 (London); 11:00-12:45 (New York, NOTE TIME CHANGE)

For information about the Phil Stat Wars forum and how to join, click on this link.

“How should applied science journal editors deal with statistical controversies?”

Mark Burgman (more…)

The sixth meeting of our Phil Stat Forum*:

The Statistics Wars

18 February, 2021

TIME: 15:00-16:45 (London); 10-11:45 a.m. (New York, EST)

For information about the Phil Stat Wars forum and how to join, click on this link.

“Testing with Models that Are Not True”

Christian Hennig (more…)

The fifth meeting of our Phil Stat Forum*:

The Statistics Wars

28 January, 2021

TIME: 15:00-16:45 (London); 10-11:45 a.m. (New York, EST)

“How can we improve replicability?”

Alexander Bird (more…)

The fourth meeting of our New Phil Stat Forum*:

The Statistics Wars and Their Casualties

January 7, 16:00 – 17:30 (London time); 11 am-12:30 pm (New York, ET)**

**note time modification and date change

Putting the Brakes on the Breakthrough,

or “How I used simple logic to uncover a flaw in a controversial 60-year old ‘theorem’ in statistical foundations”

Deborah G. Mayo

(more…)

The third meeting of our New Phil Stat Forum*:

The Statistics Wars

November 19: 15:00 – 16:45 (London time)

“Randomisation and Control in the Age of Coronavirus”

Stephen Senn

(more…)

VIDEO

National Institute of Statistical Sciences (NISS): The Statistics Debate (Video)
