The Statistics Wars and Their Casualties Videos & Slides from Sessions 1 & 2

Below are the videos and slides from the 6 talks from Session 1 and Session 2 of our workshop The Statistics Wars and Their Casualties held on September 22 & 23, 2022. Session 1 speakers were: Deborah Mayo (Virginia Tech), Richard Morey (Cardiff University), Stephen Senn (Edinburgh, Scotland). Session 2 speakers were: Daniël Lakens (Eindhoven University of Technology), Christian Hennig (University of Bologna), Yoav Benjamini (Tel Aviv University). Abstracts can be found here and the schedule here. Some participant-related publications are on this page.

The final 2 sessions of our online workshop (Sessions 3 and 4) were held on Thursdays, Dec 1 and Dec 8, 2022, from 15:00-18:15 (London time) and 10am-1:15pm (New York City time); see the list of speakers and link to videos at the end of this post.

SESSION 1

Brief Intro to Session 1 by David Hand (Imperial College)

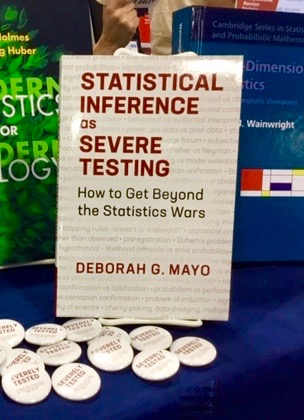

Deborah Mayo (Virginia Tech):

The Statistics Wars and Their Casualties

Richard Morey (Cardiff University)

Bayes factors, p values, and the replication crisis

Slide show is posted on his webpage here.

Stephen Senn (Edinburgh)

The replication crisis: are P-values the problem and are Bayes factors the solution?

Session 1 Discussion

SESSION 2

[Brief Intro to Session 2 by Stephen Senn (Edinburgh)]

Daniël Lakens (Eindhoven University of Technology)

The role of background assumptions in severity appraisal

Christian Hennig (University of Bologna)

On the interpretation of the mathematical characteristics of statistical tests

Yoav Benjamini (Tel Aviv University)

The two statistical cornerstones of replicability: addressing selective inference and irrelevant variability

Session 2 Discussion

SESSIONS #3 & #4

The videos and slides from the 7 talks from Session 3 and Session 4 of our workshop The Statistics Wars and Their Casualties held on December 1 & 8, 2022 can be found on this post. Session 3 speakers were: Daniele Fanelli (London School of Economics and Political Science), Stephan Guttinger (University of Exeter), and David Hand (Imperial College London). Session 4 speakers were: Jon Williamson (University of Kent), Margherita Harris (London School of Economics and Political Science), Aris Spanos (Virginia Tech), and Uri Simonsohn (Esade Ramon Llull University). Abstracts can be found here. In addition to the talks, you’ll find (1) a Recap of recaps at the beginning of Session 3 that provides a summary of Sessions 1 & 2, and (2) Mayo’s (5-minute) introduction to the final discussion: “Where do we go from here (Part ii)” at the end of Session 4.

THE STATISTICS WARS AND THEIR CASUALTIES VIDEOS & SLIDES FROM SESSIONS 3 & 4

Below are the videos and slides from the 7 talks from Session 3 and Session 4 of our workshop The Statistics Wars and Their Casualties held on December 1 & 8, 2022. Session 3 speakers were: Daniele Fanelli (London School of Economics and Political Science), Stephan Guttinger (University of Exeter), and David Hand (Imperial College London). Session 4 speakers were: Jon Williamson (University of Kent), Margherita Harris (London School of Economics and Political Science), Aris Spanos (Virginia Tech), and Uri Simonsohn (Esade Ramon Llull University). Abstracts can be found here. In addition to the talks, you’ll find (1) a Recap of recaps at the beginning of Session 3 that provides a summary of Sessions 1 & 2, and (2) Mayo’s (5-minute) introduction to the final discussion: “Where do we go from here (Part ii)” at the end of Session 4.

The videos & slides from Sessions 1 & 2 can be found on this post.

Readers are welcome to use the comments to this blog post to make constructive comments or to ask questions of the speakers. If you’re asking a question, indicate to which speaker(s) it is directed. We will leave it to speakers to respond. Thank you!

SESSION 3

Recap of recaps summary of Sessions 1 & 2:

Introduction to Session: Daniël Lakens (Eindhoven University of Technology)

Daniele Fanelli (London School of Economics and Political Science)

The neglected importance of complexity in statistics and Metascience

Stephan Guttinger (University of Exeter)

What are questionable research practices?

David Hand (Imperial College London)

What’s the question?

Discussion (Session 3): (a) Panel discussion of speakers; (b) general audience discussion; (c) “Where do we go from here (Part i)” participant discussion.

SESSION 4

Introduction to Session 4: Deborah Mayo (Virginia Tech)

Jon Williamson (University of Kent)

Causal inference is not statistical inference

Margherita Harris (London School of Economics and Political Science)

On Severity, the Weight of Evidence, and the Relationship Between the Two

Aris Spanos (Virginia Tech)

Revisiting the Two Cultures in Statistical Modeling and Inference as they relate to the Statistics Wars and Their Potential Casualties

Uri Simonsohn (Esade Ramon Llull University)

Mathematically Elegant Answers to Research Questions No One is Asking (meta-analysis, random effects models, and Bayes factors)

Where Should Stat Activists Go From Here? Deborah Mayo (Virginia Tech):

Discussion: (a) Panel discussions; (b) General audience discussion; (c) “Where do we go from here (Part ii)” participants and audience.

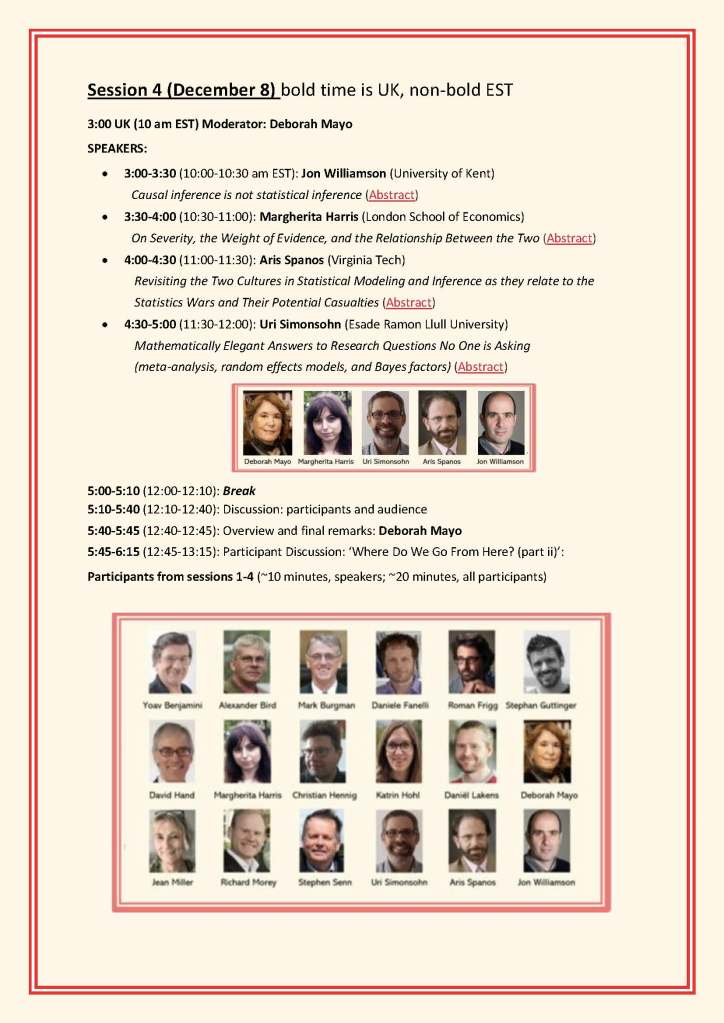

Final session: The Statistics Wars and Their Casualties: 8 December, Session 4

Thursday, December 8 will be the Final Session (Session 4) of my workshop, The Statistics Wars and Their Casualties. There will be 4 new speakers. It’s not too late to register:

At the end of this post is “A recap of recaps”, the short video we showed at the beginning of Session 3 last week that summarizes the presentations from Sessions 1 & 2 back on September 22-23.

WORKSHOP

The Statistics Wars

and Their Casualties

1 December and 8 December 2022

Sessions #3 and #4

15:00-18:15 London Time / 10:00am-1:15pm EST

ONLINE

(London School of Economics, CPNSS)

registration form

For slides and videos of Sessions #1 and #2: see this workshop post

1 December

Session 3 (Moderator: Daniël Lakens, Eindhoven University of Technology)

OPENING

- “What Happened So Far”: A medley (20 min) of recaps from Sessions 1 & 2: Deborah Mayo (Virginia Tech), Richard Morey (Cardiff), Stephen Senn (Edinburgh), Daniël Lakens (Eindhoven), Christian Hennig (Bologna) & Yoav Benjamini (Tel Aviv).

SPEAKERS

- Daniele Fanelli (London School of Economics and Political Science) The neglected importance of complexity in statistics and Metascience (Abstract)

- Stephan Guttinger (University of Exeter) What are questionable research practices? (Abstract)

- David J. Hand (Imperial College, London) What’s the question? (Abstract)

DISCUSSIONS:

- Closing Panel: “Where Should Stat Activists Go From Here (Part i)?”: Yoav Benjamini, Daniele Fanelli, Stephan Guttinger, David Hand, Christian Hennig, Daniël Lakens, Deborah Mayo, Richard Morey, Stephen Senn

8 December

Session 4 (Moderator: Deborah Mayo, Virginia Tech)

SPEAKERS

- Jon Williamson (University of Kent) Causal inference is not statistical inference (Abstract)

- Margherita Harris (London School of Economics and Political Science) On Severity, the Weight of Evidence, and the Relationship Between the Two (Abstract)

- Aris Spanos (Virginia Tech) Revisiting the Two Cultures in Statistical Modeling and Inference as they relate to the Statistics Wars and Their Potential Casualties (Abstract)

- Uri Simonsohn (Esade Ramon Llull University) Mathematically Elegant Answers to Research Questions No One is Asking (meta-analysis, random effects models, and Bayes factors) (Abstract)

DISCUSSIONS:

- Closing Panel: “Where Should Stat Activists Go From Here (Part ii)?”: Workshop Participants: Yoav Benjamini, Alexander Bird, Mark Burgman, Daniele Fanelli, Stephan Guttinger, David Hand, Margherita Harris, Christian Hennig, Daniël Lakens, Deborah Mayo, Richard Morey, Stephen Senn, Uri Simonsohn, Aris Spanos, Jon Williamson

**********************************************************************

WORKSHOP DESCRIPTION: While the field of statistics has a long history of passionate foundational controversy, the last decade has, in many ways, been the most dramatic. Misuses of statistics, biasing selection effects, and high-powered methods of big-data analysis have helped to make it easy to find impressive-looking but spurious results that fail to replicate. As the crisis of replication has spread beyond psychology and social sciences to biomedicine, genomics, machine learning and other fields, the need for critical appraisal of proposed reforms is growing. Many are welcome (transparency about data, eschewing mechanical uses of statistics); some are quite radical. The experts do not agree on the best ways to promote trustworthy results, and these disagreements often reflect philosophical battles, old and new, about the nature of inductive-statistical inference and the roles of probability in statistical inference and modeling. Intermingled in the controversies about evidence are competing social, political, and economic values. If statistical consumers are unaware of assumptions behind rival evidence-policy reforms, they cannot scrutinize the consequences that affect them. What is at stake is a critical standpoint that we may increasingly be in danger of losing. Critically reflecting on proposed reforms and changing standards requires insights from statisticians, philosophers of science, psychologists, journal editors, economists and practitioners from across the natural and social sciences. This workshop will bring together these interdisciplinary insights, from speakers as well as attendees.

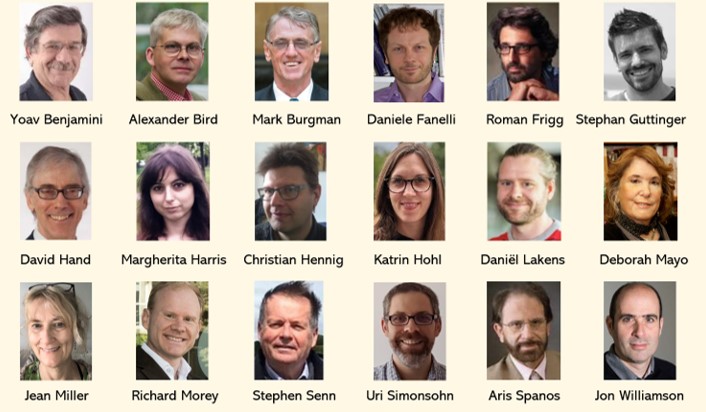

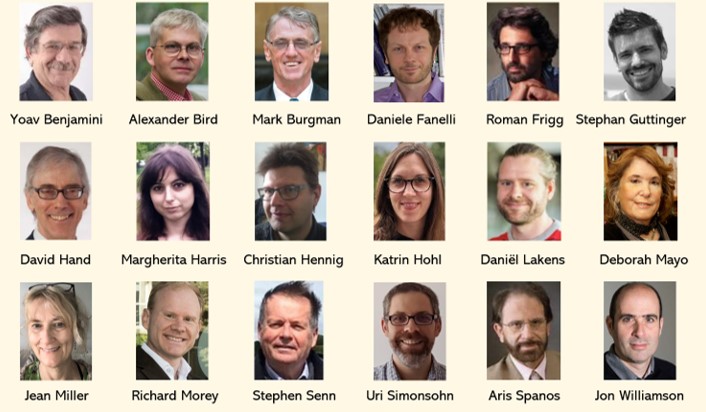

Speakers/Panellists:

Yoav Benjamini (Tel Aviv University), Alexander Bird (University of Cambridge), Mark Burgman (Imperial College London), Daniele Fanelli (London School of Economics and Political Science), Roman Frigg (London School of Economics and Political Science), Stephan Guttinger (University of Exeter), David Hand (Imperial College London), Margherita Harris (London School of Economics and Political Science), Christian Hennig (University of Bologna), Daniël Lakens (Eindhoven University of Technology), Deborah Mayo (Virginia Tech), Richard Morey (Cardiff University), Stephen Senn (Edinburgh, Scotland), Uri Simonsohn (Esade Ramon Llull University), Aris Spanos (Virginia Tech), Jon Williamson (University of Kent)

Sponsors/Affiliations:

The Foundation for the Study of Experimental Reasoning, Reliability, and the Objectivity and Rationality of Science (E.R.R.O.R.S.); Centre for Philosophy of Natural and Social Science (CPNSS), London School of Economics; Virginia Tech Department of Philosophy

Organizers: D. Mayo, R. Frigg and M. Harris

Logistician (chief logistics and contact person): Jean Miller

Executive Planning Committee: Y. Benjamini, D. Hand, D. Lakens, S. Senn

To register for the workshop,

please fill out the registration form here.

The Statistics Wars & Their Casualties: Slides from Sessions 1 & 2

The Statistics Wars and Their Casualties

Session 1 (September 22, 2022)

Deborah Mayo (Virginia Tech)

The Statistics Wars and Their Casualties (Abstract)

Richard Morey (Cardiff University)

Bayes factors, p values, and the replication crisis (Abstract)

Slide show is posted on his webpage here.

Stephen Senn (Edinburgh, Scotland): The replication crisis: are P-values the problem and are Bayes factors the solution? (Abstract) (more…)

WORKSHOP

The Statistics Wars

and Their Casualties

22-23 September 2022

15:00-18:00 London Time*

ONLINE

(London School of Economics, CPNSS)

To register for the workshop,

please fill out the registration form here.

*These will be sessions 1 & 2; there will be two more

online sessions (3 & 4) on December 1 & 8.

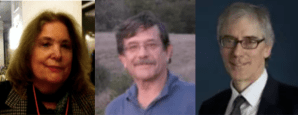

January 11: Phil Stat (Remote) Forum

Special Session of the (remote)

Phil Stat Forum:

11 January 2022

“Statistical Significance Test Anxiety”

TIME: 15:00-17:00 (London, GMT); 10:00-12:00 (EST)

Presenters: Deborah Mayo (Virginia Tech) &

Yoav Benjamini (Tel Aviv University)

Moderator: David Hand (Imperial College London)

Deborah Mayo, Yoav Benjamini, David Hand

Focus of the Session:

March 25 “How should applied science journal editors deal with statistical controversies?” (Mark Burgman)

The seventh meeting of our Phil Stat Forum*:

The Statistics Wars

and Their Casualties

25 March, 2021

TIME: 15:00-16:45 (London); 11:00-12:45 (New York, NOTE TIME CHANGE)

For information about the Phil Stat Wars forum and how to join, click on this link.

“How should applied science journal editors deal with statistical controversies?”

Mark Burgman (more…)

The Statistics Debate

October 15, 2020: Noon – 2 pm ET

(17-19:00 London Time)

Website: https://www.niss.org/events/statistics-debate

(Online webinar debate, free but must register to attend on website above)

Debate Host: Dan Jeske (University of California, Riverside)

Participants:

Jim Berger (Duke University)

Deborah Mayo (Virginia Tech)

David Trafimow (New Mexico State University)

Where do you stand?

- Given the issues surrounding the misuses and abuse of p-values, do you think p-values should be used?

- Do you think the use of estimation and confidence intervals eliminates the need for hypothesis tests?

- Bayes Factors – are you for or against?

- How should we address the reproducibility crisis?

If you are intrigued by these questions and have an interest in how these questions might be answered – one way or the other – then this is the event for you!

Want to get a sense of the thinking behind the practicality (or not) of various statistical approaches? Interested in hearing both sides of the story – during the same session!?

This event will be held in a debate-style format. The participants will be given selected questions ahead of time, so they have a chance to think about their responses, but this is intended to be much less of a presentation and more of a give and take between the debaters.

So – let’s have fun with this! The best way to find out what happens is to register and attend!

September 24: Bayes factors from all sides: who’s worried, who’s not, and why (R. Morey)

The second meeting of our New Phil Stat Forum*:

The Statistics Wars

and Their Casualties

September 24: 15:00 – 16:45 (London time)

10-11:45 am (New York, EDT)

“Bayes Factors from all sides:

who’s worried, who’s not, and why”

Richard Morey
