The sixth meeting of our Phil Stat Forum*:

The Statistics Wars

and Their Casualties

18 February, 2021

TIME: 15:00-16:45 (London); 10-11:45 a.m. (New York, EST)

For information about the Phil Stat Wars forum and how to join, click on this link.


“Testing with Models that Are Not True”

Christian Hennig

ABSTRACT: The starting point of my presentation is the apparently popular idea that in order to do hypothesis testing (and more generally frequentist model-based inference) we need to believe that the model is true, and the model assumptions need to be fulfilled. I will argue that this is a misconception. Models are, by their very nature, not “true” in reality. Mathematical results secure favourable characteristics of inference in an artificial model world in which the model assumptions are fulfilled. For using a model in reality we need to ask what happens if the model is violated in a “realistic” way. One key approach is to model a situation in which certain model assumptions of, e.g., the model-based test that we want to apply, are violated, in order to find out what happens then. This, somewhat inconveniently, depends strongly on what we assume, how the model assumptions are violated, whether we make an effort to check them, how we do that, and what alternative actions we take if we find them wanting. I will discuss what we know and what we can’t know regarding the appropriateness of the models that we “assume”, and how to interpret them appropriately, including new results on conditions for model assumption checking to work well, and on untestable assumptions.

Christian Hennig has been a Professor in the Department of Statistical Sciences “Paolo Fortunati” at the University of Bologna since November 2018. Hennig’s research interests are cluster analysis, multivariate data analysis incl. classification and data visualisation, robust statistics, foundations and philosophy of statistics, statistical modelling and applications. He was Senior Lecturer in Statistics at UCL, London, 2005-2018. Hennig studied Mathematics in Hamburg and Statistics in Dortmund. He received his doctorate from the University of Hamburg in 1997 and habilitated in 2005. In 2017 Hennig got his Italian habilitation. After having obtained his PhD, he worked as research assistant and lecturer at the University of Hamburg and ETH Zuerich.

Readings:

M. Iqbal Shamsudheen, Christian Hennig (2020) Should we test the model assumptions before running a model-based test? (PDF)

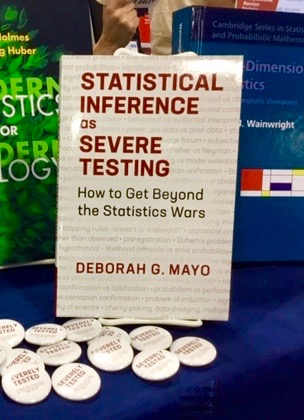

Mayo D. (2018). “Section 4.8 All Models Are False” excerpt from Statistical Inference as Severe Testing: How to Get Beyond the Statistics Wars, CUP. (pp. 296-301)

Slides and Video Links:

Christian Hennig’s slides: Testing In Models That Are Not True

Christian Hennig Presentation

- Link to paste in browser: https://phil-stat-wars.com/wp-content/uploads/2021/02/hennig_presentation.mp4

Christian Hennig Discussion

- Link to paste in browser: https://phil-stat-wars.com/wp-content/uploads/2021/02/hennig_discussion.mp4

Mayo’s Memos: Any info or events that arise that seem relevant to share with y’all before the meeting. Please check back closer to the meeting day.

*Meeting 14 of our general Phil Stat series, which began with the LSE Seminar PH500 on May 21

All: Please write any questions you might have for Professor Hennig in the comments to this blog and he or we will reply to them.

Thank you so much for your interest!

Hi Christian

Thank you for a very thought-provoking talk. I was unable to pose a question at the time because of intermittent internet problems (which the broadband provider is now trying to fix).

In Decision Analysis, assumptions are tested with sensitivity analyses. My old stats textbook did this, e.g., with worked examples of t-tests that showed the effect on P-values of assuming equal or unequal variances, both when the data showed unequal variances and when they did not.

Do you think that the other model assumptions could and should be checked by seeing the effect of changing the assumptions in the same way?

Hi Huw,

this is in line with what I was presenting, yes. Not all problematic issues are shown clearly by the data though. The equal/unequal variances case is maybe about the easiest thing to look at, for which reason much of the early literature I have cited treats this. Some conclude that the better direction to take there is to never assume equal variances, because this hardly ever loses substantial power.

Best wishes,

Christian

PS: The remark on not assuming equal variances is for the two-sample case; with more than two samples it can be more complicated.

Thank you. I agree of course with your points about my example regarding variance.

However what do you think of the idea that ideally all assumptions should be tested routinely by performing sensitivity analyses for statistical calculations? This might mean giving a range for estimates such as P-values and confidence intervals.

Is it the hassle of doing it or the perplexity it might cause that prevents this or do you think that the calculations would be insensitive to different assumptions anyway?

Dear Huw,

I generally think that sensitivity analysis of this kind is worthwhile, however it is hard to make a recommendation for routine use. The problem is that the range of possibilities is vast, actually so vast that it can never be fully covered, and there will always be possibilities that cannot be ruled out by the data and that can have any kind of undesired consequences, when allowing for general enough dependence or non-identity structures. I have a partly optimistic and partly pessimistic message about this. The optimistic part is that we can do quite a bit more than what is currently done; that far I’d concur with your approach. The pessimistic part is that we have to live with the fact that we have to rule out some things that cannot be ruled out by the data in order to get anywhere. If we do sensitivity analysis too well, we will just find that nothing can be said (which of course to some extent is honest, or rather, it nails down what kind of stuff we need to exclude for other reasons than that the data contradict it). That’s one issue, here is another important one that also came up in my talk.

The parameter to be estimated and the p-value are defined within the assumed model. If we start to ask, “what happens if the assumed model does not hold”, we have to decide what exactly we want to estimate in the model that we try out instead. This is, as I hope to have shown, not a trivial matter. It rather requires subtle judgment that can hardly be prescribed in a routine way. In fact I prefer to define p-values with respect to the assumed nominal model. In this way, a p-value is an observable quantity about the relation between the data and the assumed model, regardless of how the data were actually generated. I don’t like the idea of an unobservable “true” p-value (which is required to define “ranges” from sensitivity analysis) as this would require a rigorous definition of the H0 in the general space of (possible) distributions. This is, as already stated above, far from trivial, and cannot be done, in my view, in a general way. My way of looking at it is rather to stick to the observed and well defined p-value and then, when doing sensitivity analyses, to specify what the error probabilities based on this p-value would be if the underlying distribution were different (which can be done conditionally on whether this is assigned to the “substantial H0”, the substantial alternative, or none of them, so a final decision is not required).
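A minimal simulation sketch of this idea, under stated assumptions: the nominal model is i.i.d. normal data tested with a one-sample t-test (n = 20, 5% level), and the alternative data-generating process is a stationary AR(1) series with mean 0, which is here assigned to the “substantial H0”. The AR(1) choice, the value rho = 0.5 and all function names are illustrative, not taken from the talk.

```python
import math
import random
from statistics import mean, stdev

T_CRIT_19 = 2.093  # two-sided 5% critical value of Student's t with 19 degrees of freedom


def nominal_t_rejects(sample, mu0=0.0):
    """One-sample t-test of H0: mean = mu0, computed as if the data were i.i.d. normal (n = 20)."""
    n = len(sample)
    t = (mean(sample) - mu0) / (stdev(sample) / math.sqrt(n))
    return abs(t) > T_CRIT_19


def iid_normal(rng, n):
    """Data that satisfy the nominal model."""
    return [rng.gauss(0.0, 1.0) for _ in range(n)]


def ar1_normal(rng, n, rho=0.5):
    """Stationary AR(1) series with mean 0, variance 1 and lag-one correlation rho."""
    x = [rng.gauss(0.0, 1.0)]
    innovation_sd = math.sqrt(1.0 - rho * rho)
    for _ in range(n - 1):
        x.append(rho * x[-1] + rng.gauss(0.0, innovation_sd))
    return x


def rejection_rate(generator, reps=4000, n=20, seed=1):
    """Share of simulated data sets on which the nominal test rejects at the 5% level."""
    rng = random.Random(seed)
    return sum(nominal_t_rejects(generator(rng, n)) for _ in range(reps)) / reps


rate_iid = rejection_rate(iid_normal)
rate_ar1 = rejection_rate(ar1_normal)
print(f"nominal model (i.i.d. normal): rejection rate = {rate_iid:.3f}")
print(f"AR(1), rho = 0.5, mean still 0: rejection rate = {rate_ar1:.3f}")
```

The p-value and the test are always computed from the nominal model; what changes is the process generating the data. Under the nominal model the rejection rate stays near 5%, while under positive dependence it is well above that, although the mean is still 0.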

Best wishes,

Christian

Thinking about this a bit longer, I actually think, in the framework of a sensitivity analysis, it can make sense to ask what the p-value of the same test would have been with another model as null hypothesis (as long as this is interpreted as also formalising the “substantial H0”). I’d probably still not specify an interval for the p-value and would still use the terminology so that it is not implied that there could be any “true” p-value apart from the one computed using the nominal null model. A key issue is to do and interpret these things without pretending that the results could in any way be complete or exhaustive.

Dear Christian

Thank you again for explaining the issues so clearly. My practical experience is not that of a statistician but as a doctor trying to make predictions. I think that when I arrive at probabilities in medical situations, I also make all sorts of untestable assumptions, some of which are conscious but many subconscious and difficult to identify in order to make sensitivity analyses. I have also been aware since I was a student that I calibrate my probabilities mentally and informally by noting to what extent the average of a group of probabilities corresponds to the overall frequency of correct predictions. I used to teach my students to do this by making a prediction, writing down the probability of a specified outcome in a little notebook and then recording the subsequent outcome of each prediction. They found it very illuminating and it resulted in an improvement in ‘clinical judgement’ so that they had less of a tendency to be over or under-confident.
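The notebook exercise amounts to a simple calibration check, sketched below. The notebook entries are hypothetical, and the function names are invented for this illustration.

```python
from collections import defaultdict

# Hypothetical notebook entries: (stated probability of the outcome, whether it occurred).
notebook = [
    (0.9, True), (0.9, True), (0.9, False), (0.9, True),
    (0.7, True), (0.7, False), (0.7, True),
    (0.3, False), (0.3, True), (0.3, False),
]


def calibration(records):
    """For each stated probability: number of predictions and observed frequency of the outcome."""
    groups = defaultdict(list)
    for prob, occurred in records:
        groups[round(prob, 1)].append(occurred)
    return {p: (len(o), sum(o) / len(o)) for p, o in sorted(groups.items())}


def overall(records):
    """Average stated probability versus overall frequency of the predicted outcome."""
    mean_prob = sum(p for p, _ in records) / len(records)
    frequency = sum(o for _, o in records) / len(records)
    return mean_prob, frequency


for prob, (count, freq) in calibration(notebook).items():
    print(f"stated {prob:.1f}: {count} predictions, outcome occurred in {freq:.2f} of them")

mean_prob, frequency = overall(notebook)
print(f"average stated probability {mean_prob:.2f} vs overall frequency {frequency:.2f}")
```

A forecaster is well calibrated to the extent that the stated probabilities and observed frequencies agree. In this made-up notebook the average stated probability (0.66) is somewhat above the observed frequency (0.60), which would suggest mild over-confidence.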

I wonder therefore whether the best way forward in assessing the overall validity of multiple statistical assumptions would be to try to calibrate them too. I suppose the way to do this would be to link a group of P values to the frequency of replication. I see the medical analogy of P values not in terms of sensitivity and specificity (which is a model peculiar to screening) but in the way we assess the probability of a single test result exceeding a diagnostic threshold if it were repeated a large (or infinite) number of times (i.e. the probability of that diagnosis conditional on a single test result). This is based on the test’s precision in terms of one or 1.96 standard deviations which is analogous to one SEM or a 95% confidence interval for a single study.

This of course means assuming uniform priors, which I argue in a PLOS paper (see https://journals.plos.org/plosone/article?id=10.1371/journal.pone.0212302) is an essential condition of the random screening model, which is fundamental to the assessment of test precision, diagnostic probabilities, confidence intervals and P values. In the above paper I argue that a Bayesian prior is essentially the likelihood distribution of a prior study (real or imagined) that is combined with the likelihood distribution of a current study by assuming uniform priors and statistical independence in what is essentially another approach to meta-analysis (see Figure 6 and the preceding section 3.8. entitled ‘Combining probability distributions using Bayes’ rule’ in the above PLOS paper).

From this medical analogy, I argue that if we have a study result with a P value of 0.025 then the probability of replication by repeating the study with a result at least greater than the null hypothesis with an INFINITE number of observations (when all assumptions are satisfied that allow us to use a random sampling model) is 1-0.025 = 0.975 (replication A). The probability of replication at least greater than the null hypothesis with the SAME number of observations as in the original study is 0.917 (replication B). However, the probability of replicating the study with the same number of observations and with a P value of 0.025 again is 0.283 (replication C). The calculations for this are shown here: https://osf.io/j2wq8/ .
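The three figures can be reproduced with normal-distribution arithmetic. The sketch below is one reading that happens to match the quoted numbers, not necessarily the calculation in the linked OSF document. It assumes a normal sampling distribution, a uniform prior, and a replicate estimate whose predictive variance is twice the squared standard error. In particular, the expression used for replication C was chosen because it yields 0.283.

```python
from math import sqrt
from statistics import NormalDist

std_normal = NormalDist()  # standard normal distribution


def replication_probabilities(p_one_sided):
    """Replication probabilities A, B and C for a given one-sided P value (assumed formulas)."""
    z = std_normal.inv_cdf(1 - p_one_sided)   # observed effect in standard-error units
    rep_a = std_normal.cdf(z)                 # infinite replicate: equals 1 - P
    rep_b = std_normal.cdf(z / sqrt(2))       # same-size replicate on the same side of the null
    rep_c = std_normal.cdf(z / sqrt(2) - z)   # same-size replicate reaching the same P value again
    return rep_a, rep_b, rep_c


rep_a, rep_b, rep_c = replication_probabilities(0.025)
print(f"replication A: {rep_a:.3f}")
print(f"replication B: {rep_b:.3f}")
print(f"replication C: {rep_c:.3f}")
```

For P = 0.025 this prints 0.975, 0.917 and 0.283, the values given in the comment.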

Therefore if we conduct replication C for different studies in a ‘survey’ of such different studies and get a 28.3 % success rate overall this would be consistent with the assumptions being reasonably valid (at least on average). Failing this, the original P values and probabilities of replication A (i.e. 1-P) could be calibrated. The first P value, the initial number of observations, the subsequent number of observations and the subsequent P value could vary from study to study, so that the probabilities of replication would vary. The essential point is that the averages of all the probabilities of replication in the survey should match the overall probabilities of replication.

What do you think?

With best wishes

Huw

PS. Apologies for the above typos and unnoticed predictive text changes. In particular ‘essential condition of the random screening model’ should have been ‘essential condition of the random SAMPLING model’.

Dear Huw,

well, this leads a bit away from the topic of my presentation and would be worth its own presentation and discussion. Personally I’d define “replication” rather based on confidence intervals than on p-values, but I can’t say that I have thought a lot about this. Surely, if we have a number of studies for which it makes sense to assume the same model, we are in a much better situation than the one I was talking about, which was about diagnosing model assumptions and testing based on the same data. (If one wants to test whether the same model is reasonable for two studies rather than just assuming it, this is closer again to what we were investigating.)

What I can say is that one could apply the approach I was discussing again to a “method M for checking successful replication based on p-values”, so accepting whatever concept of replication you use, if you define a method M that decides in a data-based way whether study S was successfully replicated by another study T, one can analyse by simulation or if possible theory replication probabilities if M is carried out implicitly, say for p-value computation, based on model assumptions P, looking at a situation where in fact model Q is in effect.

Best wishes,

Christian

Dear Christian

Thank you again for identifying the issues. Perhaps I should explain how I see the relationship between P values and confidence limits. If one of the 95% confidence limits corresponds to the null hypothesis then the one-sided P = 0.025. Therefore in general I regard the null hypothesis as one of the 100(1-2P)% confidence limits (assuming a symmetrical distribution and that all the other assumptions are valid). I therefore regard a diagnostic threshold as being analogous to a null hypothesis but applying SDs to diagnosis and SEMs for scientific hypotheses.
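The stated correspondence can be checked numerically. The sketch below assumes a normally distributed estimate with known standard error; the estimate, standard error and function names are hypothetical.

```python
from statistics import NormalDist

std_normal = NormalDist()  # standard normal distribution


def one_sided_p(estimate, se, null=0.0):
    """One-sided P value for an estimate lying above the null value."""
    return 1 - std_normal.cdf((estimate - null) / se)


def lower_confidence_limit(estimate, se, level):
    """Lower limit of a symmetric two-sided confidence interval with the given coverage level."""
    z = std_normal.inv_cdf(0.5 + level / 2)
    return estimate - z * se


# Hypothetical study: estimate 2.5 with standard error 1.0, null value 0.
estimate, se, null = 2.5, 1.0, 0.0
p = one_sided_p(estimate, se, null)
limit = lower_confidence_limit(estimate, se, level=1 - 2 * p)
print(f"one-sided P = {p:.4f}")
print(f"lower limit of the {100 * (1 - 2 * p):.2f}% interval = {limit:.6f}")
```

Up to rounding error, the lower limit of the 100(1-2P)% interval coincides with the null value, which is the relationship described above. With an estimate 1.96 standard errors above the null, the same functions give P = 0.025 and a 95% interval.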

In terms of hypothesis testing, I estimate the probability that the hypothesis will be confirmed (eg 0.975) by a single diagnostic test result or a scientific study result being replicated by falling on the same side of the threshold after repeating the observations an infinite number of times.

Does this make sense?

With best wishes

Huw

Dear Huw,

I might not be the best person to discuss this because I haven’t thought much about replication probabilities. What you propose may be fine but I haven’t invested much time for thinking it through. Personally I avoid the term “confirmation” of a hypothesis; surely no test can “confirm” the H0. It may be possible to give the term a different meaning that is less problematic, but I can well do without. This doesn’t need to be a problem with what you’re proposing.

Best wishes,

Christian

Dear Christian

Thank you for making an important point about “probability of a hypothesis being confirmed”. Instead, I should have said the probability of one ‘outcome’ or consequence of the hypothesis being confirmed (eg a probability of 0.975 that a RCT will show at least some difference after an infinite number of observations). This would be a theoretical probability based on assuming that a random sampling model is applicable. The hypothesis may also include other predictions and assumptions, each of which may have a theoretical probability of being valid or true.

This probable outcome of a RCT result after an infinite number of observations would support a new hypothesis that the treatment will also be successful during day to day care. This is what happens with diagnoses, one diagnosis always suggesting another. A ‘diagnostic criterion’ is a combination of findings that justifies using such a working diagnosis / hypothesis.

In clinical medicine we therefore don’t think in terms of disease criteria but in terms of more flexible ‘sufficient’ diagnostic criteria that only justify the use of ‘diagnoses’. It is epidemiologists that tend to think in terms of ‘disease’ and definitive gold standards (as opposed to diagnoses and their sufficient criteria). They do this in order to calculate sensitivities and specificities. However such gold standards that identify all those and only those with a disease rarely if ever exist as exemplified by Covid-19. Perhaps in epidemiology tests should be best used as ‘markers’ for tracking rises and falls in disease prevalence by assuming that the unknown sensitivities and specificities are constant (as happened for Covid-19).

I deal with all this in more detail in the next edition of the Oxford Handbook of Clinical Diagnosis. Perhaps it would be good to explore in one of these meetings the way terms such as diagnoses, theories and hypotheses are used in clinical practice, science and statistics.

With best wishes

Huw

My list of some casualties:

Here are some comments on casualties to get the discussion going.

A central focus of the statistics wars concerns statistical significance tests, and some recommend banning them outright. A major casualty is that they’re a main way to check the statistical assumptions that are used across rival accounts.

Even Bayesians will turn to them, if they care to check their assumptions: examples are George Box, Jim Berger, Andrew Gelman. (Described in some of the work by Hennig and Gelman.)

A second but related casualty comes out in the charge that since the statistical model used in testing is known to be wrong, there’s no point in testing a null hypothesis within that model. That’s dangerously confused and wrong. Remember the statistics debate that served as our October meeting? One of the debaters, David Trafimow, editor of Basic and Applied Social Psychology, gives this as the reason for banning p-value reports in published papers (though it’s OK to use them in the paper to get it accepted).

Here’s a quote from him:

“See, it’s tantamount to impossible that the model is correct, and so …you’re using the P-value to index evidence against a model that is already known to be wrong. … And so there’s no point in indexing evidence against it.”

But the statistical significance test is not testing whether the overall statistical model is wrong, and it is not indexing evidence against that model. It is only testing the null hypothesis (or test hypothesis) H0 within it. If the primary test is, say, about probability of success on each independent Bernoulli trial, it’s not also testing assumptions, say independence. Violated assumptions can lead to an incorrectly high, or an incorrectly low, P-value: P-values don’t “track” violated assumptions.
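A small simulation illustrates the last point. It is a sketch under stated assumptions: the primary test is an exact two-sided binomial test of success probability 0.5 on 50 trials, and dependence is introduced through a two-state Markov chain that keeps the marginal success probability at 0.5. The `stay` parameter and all function names are invented for this example.

```python
import random
from math import comb


def binomial_two_sided_p(successes, n, p0=0.5):
    """Exact two-sided p-value for H0: success probability = p0, computed as if trials were independent."""
    pmf = [comb(n, k) * p0 ** k * (1 - p0) ** (n - k) for k in range(n + 1)]
    observed = pmf[successes]
    return min(1.0, sum(q for q in pmf if q <= observed * (1 + 1e-9)))


def markov_trials(rng, n, stay):
    """0/1 trials with marginal success probability 0.5; each trial repeats the previous one with probability `stay`."""
    x = [rng.random() < 0.5]
    for _ in range(n - 1):
        x.append(x[-1] if rng.random() < stay else not x[-1])
    return x


def rejection_rate(stay, n=50, reps=3000, alpha=0.05, seed=2):
    """Share of simulated sequences on which the binomial test rejects H0, although H0 is true."""
    rng = random.Random(seed)
    p_values = [binomial_two_sided_p(k, n) for k in range(n + 1)]
    rejections = sum(p_values[sum(markov_trials(rng, n, stay))] < alpha for _ in range(reps))
    return rejections / reps


rate_independent = rejection_rate(stay=0.5)  # independent trials
rate_positive = rejection_rate(stay=0.8)     # positively dependent trials
rate_negative = rejection_rate(stay=0.2)     # negatively dependent trials
print(f"independent: {rate_independent:.3f}")
print(f"positive dependence: {rate_positive:.3f}")
print(f"negative dependence: {rate_negative:.3f}")
```

In all three settings the success probability really is 0.5. With positive dependence the test rejects far more often than 5%, so its P-values are too low. With negative dependence it almost never rejects, so they are too high. The P-value from the primary test gives no signal about which situation holds, which is why independence needs its own check.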

There are design-based tests and model-based tests. In “design-based” tests, we look to experimental procedures, within our control, as with randomization, to get the assumptions to hold.

When we don’t have design-based assumptions, a standard way to check the assumptions is to get those checks to be independent of the unknowns in the primary test.

The secret of statistical significance tests that enables them to work so well is that running them only requires that the distribution of the test statistic be known, at least approximately, under the assumption of H0, whatever it is. It comes about as close to offering a direct falsification as one can hope for.

Given the assumption that the trials are like coin tossing (independent Bernoulli trials), I can deduce the probability of getting an even greater number of successes in a row, in n trials, than I observed, and so determine the p-value, where the null hypothesis is that the assumption of randomness holds.

What’s being done is often described as conditioning on the value of the sufficient statistic. (David Cox)

If you can’t do this, you’ve picked the wrong test statistic for the case at hand.
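A sketch of such a check: hold the observed number of successes fixed (the sufficient statistic for the success probability) and ask how often a random rearrangement of the same trials shows a longest run of successes at least as long as the one observed. Under independent Bernoulli trials every rearrangement is equally likely, so the result does not depend on the unknown success probability. The Monte Carlo approach, the use of "at least as long" rather than "strictly longer", and the function names are choices made for this illustration.

```python
import random


def longest_success_run(trials):
    """Length of the longest unbroken run of successes in a 0/1 sequence."""
    longest = current = 0
    for t in trials:
        current = current + 1 if t else 0
        longest = max(longest, current)
    return longest


def run_test_p_value(trials, reps=5000, seed=3):
    """Monte Carlo p-value for randomness, conditional on the observed number of successes."""
    rng = random.Random(seed)
    observed = longest_success_run(trials)
    shuffled = list(trials)
    hits = 0
    for _ in range(reps):
        rng.shuffle(shuffled)  # same number of successes, random order
        if longest_success_run(shuffled) >= observed:
            hits += 1
    return (hits + 1) / (reps + 1)  # count the observed arrangement itself


# Hypothetical sequences with 10 successes in 20 trials each.
clustered = [1] * 10 + [0] * 10
alternating = [1, 0] * 10

p_clustered = run_test_p_value(clustered)
p_alternating = run_test_p_value(alternating)
print(f"clustered: p = {p_clustered:.4f}")
print(f"alternating: p = {p_alternating:.4f}")
```

The clustered sequence gets a very small p-value, indicating a departure from randomness in the direction of long runs. The alternating sequence gets p = 1 on this statistic, since every rearrangement has a run of at least one success. Detecting its excess regularity would need a different statistic, for example the total number of runs.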

In some cases there is literally hold out data for checking but that’s different. The essence of the reasoning I’m talking about uses the “same” data but modelled differently.

It can be made out entirely informally. When the 1919 Eddington eclipse tests probed departures from the Newtonian predicted light deflection in testing the Einstein deflection, the tests, which were statistical, relied upon sufficient accuracy in the telescopes. Testing the distortions of the telescopes is done independently of the primary test of the deflection effect. In one famous case, thought to support Newton, it became clear after several months of analysis that the sun’s heat had systematically distorted the telescope mirror: the mirror had melted. No assumption about general relativity was required to infer that no adequate estimate of error for star positions was possible; only known star positions were required. That’s the essence of pinpointing blame for anomalies in primary testing.

If you can’t do this, you don’t have a test from which you can learn what’s intended, because you can’t distinguish whether the observed phenomenon is a real effect or noise in the data.

One other casualty is to declare: don’t test assumptions because you might do it badly. You might infer any of many possible ways to fix a model, upon finding evidence of violations.

Some of the examples Hennig gives are based on such a fallacy: replace the primary model by one where the error disappears. Maybe replace parameters in the model of gravity so it goes away, or perhaps fiddle with the error term. Worse is if this depends on one of the rival gravity theories. That’s a disaster. There are many rival ways to save theories, and these error-fixed hypotheses pass with very low severity. The proper inference is only that you can’t use the data to reliably test the primary claim.

Looking for a decision routine on automatic pilot instead of piecemeal checking is very distant from good testing in science.