March 25 “How should applied science journal editors deal with statistical controversies?” (Mark Burgman)

The seventh meeting of our Phil Stat Forum*:

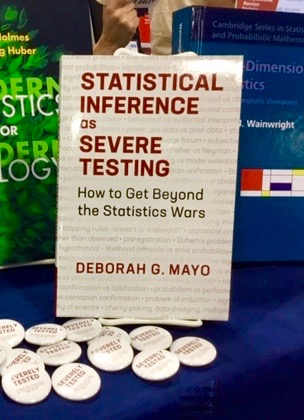

The Statistics Wars and Their Casualties

25 March 2021

TIME: 15:00-16:45 (London); 11:00-12:45 (New York, NOTE TIME CHANGE)

For information about the Phil Stat Wars forum and how to join, click on this link.

“How should applied science journal editors deal with statistical controversies?”

Mark Burgman
