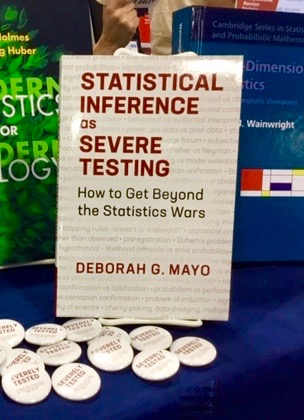

III. (June 4) Deeper Concepts: Confidence Intervals and Tests: Higgs’ Discovery:

Reading:

SIST: Excursion 3 Tour III

(Strongly) Recommended (as much as interests you): Excursion 3 Tour II: It’s the Methods, Stupid: Howlers and Chestnuts of Tests

General Info Items:

-References: Captain’s Bibliography

–Souvenirs Meeting 3: N (Rule of Thumb for SEV), O (Interpreting Probable Flukes), [L (Beyond Incompatibilist Tunnels), M (Quicksand Takeaway)]

-Summaries of 16 Tours (abstracts & keywords)

–Excerpts & Mementos on Error Statistics Philosophy Blog

Mayo Memos for Meeting 3:

-5/30 I blogged on nearly every topic in SIST as I was writing it at errorstatistics.com. Using the search on errorstatistics.com for a topic, you’ll often discover the development of ideas and discussion from readers. Reader discussion often saved me from blunders!

-A review essay of SIST by particle physicist Bob Cousins is relevant for the topics of meeting #3: Cousins, R. (2020). “Connections between statistical practice in elementary particle physics and the severity concept as discussed in Mayo’s Statistical Inference as Severe Testing” (Draft February 22, 2020), arXiv:2002.09713v1 [stat.OT].

Slides & Video Links for Meeting 3:

Slides: (PDF)

Video: https://videos.files.wordpress.com/Xmn6q0iz/lecture_3_ph500_trimmed_fmt1.ogv

To type Greek symbols in the comments, you can add the Greek keyboard to your Apple keyboard options thusly:

Go to:

Apple Menu

System preferences

Keyboard

click on “Input sources” tab

find “Greek” and press ‘+’ button at bottom to add it to your keyboard options.

This will allow you to type Greek symbols in comments simply by switching your keyboard. (To switch between keyboards, use the keyboard dropdown menu on the top right-hand side of your Apple toolbar, between the battery and the day/time; it shows a lambda for Greek, U+ for hex Unicode, etc.)

In order to type X̅ (or other accents, etc.) you will need to add the Hex unicode (U+) keyboard. (This site has pictures/instructions for how to do it: https://www.webnots.com/how-to-use-unicode-hex-input-method-in-mac/.)

Once you have added the unicode keyboard, by pressing “option” and typing in the correct code you can get the math symbol you want.

So to type X̅, you would toggle on the U+ keyboard (hex unicode), then type x, and next while holding down the option key, type the following 4 numbers: 0305, which will then put a bar over the x you had typed in.

Subscripts using unicode:

To add a subscript to a letter, using unicode keyboard, hold down option key and type in 208x, where the x stands for the number of the subscript you want,

so for subscript 0: press option and type 2080

X̅₀

for subscript 1: press option and then type 2081

X̅₁

etc.
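For anyone preparing comments in a script rather than at the keyboard, the same code points can be combined programmatically. The following is only a minimal Python sketch of the idea above: the helper names `subscript_digit` and `x_bar` are made up for illustration, and the only facts relied on are the code points already mentioned (0305 for the bar, 2080 through 2089 for subscript digits).

```python
# U+0305 is the combining overline; it attaches to the character typed before it.
COMBINING_OVERLINE = "\u0305"


def subscript_digit(d: int) -> str:
    """Return the Unicode subscript for a single digit 0-9 (U+2080 .. U+2089)."""
    if not 0 <= d <= 9:
        raise ValueError("only single digits 0-9 are covered by 208x")
    return chr(0x2080 + d)


def x_bar(sub=None) -> str:
    """Build X with a bar over it, optionally followed by a subscript digit."""
    symbol = "X" + COMBINING_OVERLINE
    if sub is not None:
        symbol += subscript_digit(sub)
    return symbol


# Prints X-bar, X-bar with subscript 0, and X-bar with subscript 1.
print(x_bar(), x_bar(0), x_bar(1))
```

The result can be pasted into a comment box; how well the bar lines up over the letter depends on the font the browser uses.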

If you have questions, please email me at jemille6@vt.edu

The discussion in meeting 3 of the Higgs last week reminded me of some recent work by Sophie Ritson in which she analysed a recent episode at the LHC where they saw a bump at 750 GeV (at much less than 5 sigma). Some physicists got really excited about this because it “indicated” the presence of a particle which was “genuinely novel” in the sense that it was not predicted by any of our best theories and no one was looking for anything in that energy range. It was subsequently shown to be a “statistical fluctuation.”

I wondered how you would think about this episode, in particular with respect to the ‘anomalies’ and ‘chance fluctuations’ we discussed last time. The striking thing about this example is that no theory predicts a bump at that energy (theories came out after the first ‘indications’ appeared), so it is not possible to make counterfactual claims about what the signal would be like under H1 before the testing took place. The best we could do is to say H0 would be what is predicted by our best theories of particle physics and H1 is the negation of H0. But even then, H1 was developed after the fact because no one was expecting to see such a bump in the data!

James:

I’m sorry that I wasn’t notified of your comment.

This (great!) example is discussed in the Higgs discussion in SIST (Excursion 3 Tour III) from last time. The bumps got smaller and disappeared. I consider this a great example of how HEP physicists falsify a potential avenue for pursuing BSM (Beyond Standard Model) physics. All have been falsified so far (to my knowledge). The negative results are quite informative for their papers “In Search For”.

Thank you for the great seminars we’ve had these last few weeks. And I have another couple of extra questions relevant to what we discussed two weeks ago (meeting 3). Here they are (I hope they make sense, and apologies if they don’t!):

1. We have discussed how ‘points within a CI correspond to distinct claims, and get different severity assignments’. That is, each point p in the CI corresponds to a claim μ > p, and each claim passes the test with the observed data with a different severity (please correct me if I am wrong!). But I was wondering whether it is also possible to calculate the severity of the claim a < μ < b (where a is the lower limit and b is the upper limit of the 95% confidence interval). In other words, is it possible to calculate the severity of the claim that a parameter of interest is within a 95% confidence interval that has both an upper and a lower bound? It seems that it should be possible, but I don’t think you write anything about this in the book, so I am not sure!

2. My second question concerns Jeffreys’ tail area criticism and your claim that your answer pins down an inferential justification for tail areas (which both Fisher and N-P failed to do). I mentioned this in the seminar a couple of weeks ago and you responded, but I am still having some trouble fully understanding how your answer pins down an inferential justification. So I thought I’d ask you again :)! I guess the best thing is for me to write down what I think your answer is, and then you can correct me if I am wrong!

-You write that the implication of Jeffreys’ tail area criticism is that ‘considering outcomes beyond d0 is to unfairly discredit H0 in the sense of finding more evidence against it than if only the actual outcome d0 is considered’. But given that the opposite is in fact true (i.e. considering the tail area makes it harder, not easier, to find an outcome that is statistically significant), you reject this implication. And you further write ‘that this alone squashes the only sense in which this could be taken as a serious criticism of the tests’. But if this is your response to Jeffreys’ tail area criticism, and given that you reject Pearson’s reply because it points to a purely performance-orientated justification, I’m having trouble understanding why we shouldn’t reject this response for the same reason. The criticism is not really about whether it is easier or harder to reject H0 if H0 is true. The issue is why considering events that did not occur is relevant to whether or not we should reject H0.

-But perhaps this is not really your response to Jeffreys’ criticism. Indeed, you later write that ‘considering other possible outcomes that could have arisen is essential for assessing the test’s capabilities’. But I have trouble understanding this answer too, because it seems to me that it is one thing to argue that the sample space matters post-data, and another to argue that ‘the non-occurrence of more deviant results is relevant’ (especially given that it seems to me that the only reason why those results are considered to be more ‘deviant’ than the one that has occurred is because of the very test we are trying to defend).

Margherita:

I think there are 2 points and you’ve described them well: answering this particular criticism of Jeffreys’ is the first, and justifying the use of the sampling distribution is the second.

On your first question, yes, you can report the SEV for the lower bound (mu > mu’), and it’s lower and lower for mu > mu” for mu” greater than mu’, until there’s actually a fairly good indication that mu < mu''', and an even better indication that mu 2, say, rather than z = 2. I think that reasoning is slipped into Jeffreys’ quip.
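The way SEV falls off for larger and larger lower bounds can be sketched numerically. The block below is only an illustration under stated assumptions: a one-sided test of a Normal mean with known σ, the standard SIST expression SEV(μ > μ₁) = P(X̄ ≤ x̄; μ = μ₁), and made-up numbers (x̄ = 152, σ = 10, n = 100) chosen so that the standard error is 1. The function names are hypothetical, and the block does not address the two-sided claim a < μ < b raised in the question.

```python
from math import sqrt
from statistics import NormalDist


def sev_greater(x_bar: float, mu1: float, sigma: float, n: int) -> float:
    """SEV(mu > mu1) = P(X-bar <= observed x_bar; mu = mu1), sigma known."""
    standard_error = sigma / sqrt(n)
    return NormalDist().cdf((x_bar - mu1) / standard_error)


def sev_less(x_bar: float, mu1: float, sigma: float, n: int) -> float:
    """SEV(mu < mu1) = P(X-bar > observed x_bar; mu = mu1), sigma known."""
    return 1.0 - sev_greater(x_bar, mu1, sigma, n)


# Illustrative (assumed) numbers: the standard error is 10 / sqrt(100) = 1.
x_bar, sigma, n = 152.0, 10.0, 100

for mu1 in (150, 151, 152, 153, 154):
    print(
        f"mu1 = {mu1}: SEV(mu > mu1) = {sev_greater(x_bar, mu1, sigma, n):.3f}, "
        f"SEV(mu < mu1) = {sev_less(x_bar, mu1, sigma, n):.3f}"
    )
```

With these assumed numbers, the severity for μ > μ₁ drops as μ₁ moves up through the interval, and past the observed mean it is the claim μ < μ₁ that starts to be well indicated.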

On your second question, are you not convinced that if even larger differences (than you observed) occur fairly frequently due to background (or chance assignment to treatment-control groups) alone, then it fails to be good evidence that it was NOT brought about by background variability alone?
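To put rough numbers on that question, here is a small sketch of how often differences at least as large as the observed one would arise from background alone. It assumes, purely for illustration, that the test statistic is standard Normal under the background-only hypothesis; the function name `upper_tail_area` is hypothetical.

```python
from statistics import NormalDist


def upper_tail_area(z: float) -> float:
    """P(Z >= z) when Z is standard Normal under background alone (assumed)."""
    return 1.0 - NormalDist().cdf(z)


# Small observed differences are exceeded often by background alone;
# large ones rarely are.
for z in (0.5, 1.0, 2.0, 3.0):
    print(f"z = {z}: P(Z >= z; background alone) = {upper_tail_area(z):.4f}")
```

Under this assumption, an observed difference of half a standard error is exceeded by background alone roughly 31% of the time, so it is poor evidence against background, whereas a difference of 2 standard errors is exceeded only about 2% of the time.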

I should note that despite my promoting an evidential and non-performance interpretation of error probabilities, the performance construal does have an important role in cases of screening. For example, anyone with this many neutralizing antibodies (to covid) or more, plus T-cells, etc., counts as being immune–or so we hope they will soon find out. In other words, there are cases where falling into the rejection region leads to the same “action” or inference (as does falling into the non-rejection region)–just as Neyman-Pearson stipulated in the “behavioristic” construal. They also had a third “inconclusive” region.

I hope we’ll further answer your query tomorrow and the following week (with power).